UX is about data.

Data can make or break a product or experience. Understanding how your users think and what your users need is key to creating a good design.

Products and Projects

Window To The World

Window To The World is a project that Alan Underwood and I created in May 2022. It is a new kind of AI, something we call “Emergent Intelligence”. Rather than using a single model that is designed to sort or classify an item, we use multiple models together to create a “Preference Engine”. This is a global model that takes data from the member models, combines it with user interaction, and over time develops a profile for that user that reflects that user’s desires based on the actions that they have taken.

Window is designed to take a random stream of images (currently we use Imgur because it is easily accessible and its URLs follow a pattern) and display those images to the user. The user sees several image thumbnails on the screen at a time. These images fade over time and are replaced with new ones. The user has three actions: They may click on a non-faded image to delete it, allowing it to be immediately replaced with a new image; they may click on a fading image to rescue it, allowing it to remain on the screen for longer; or they may zoom in on an image, allowing for a better look. Every time the user takes an action, the Preference Engine records the delete, rescue, or zoom.

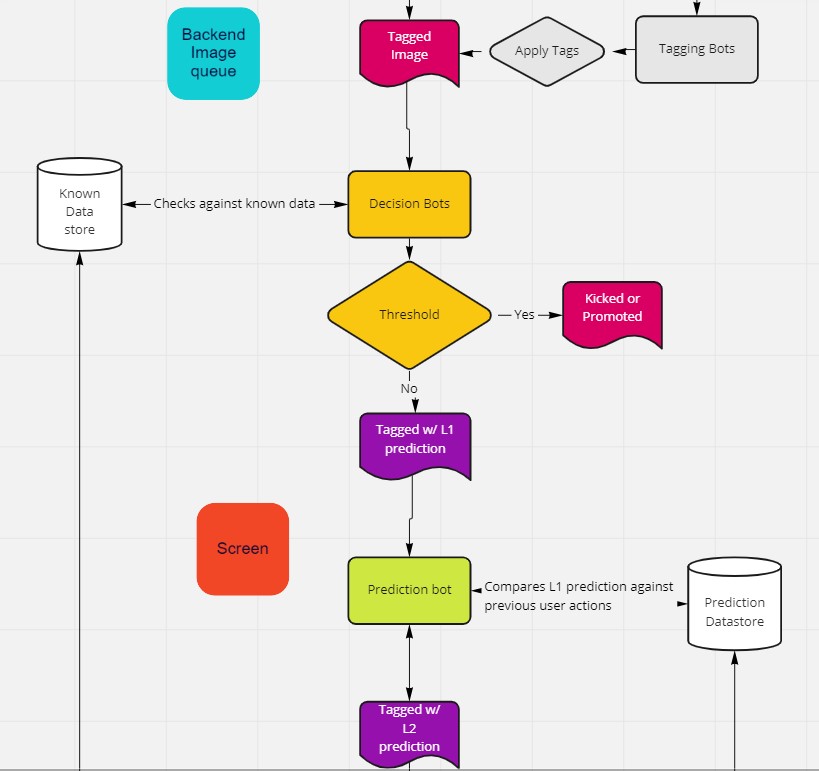

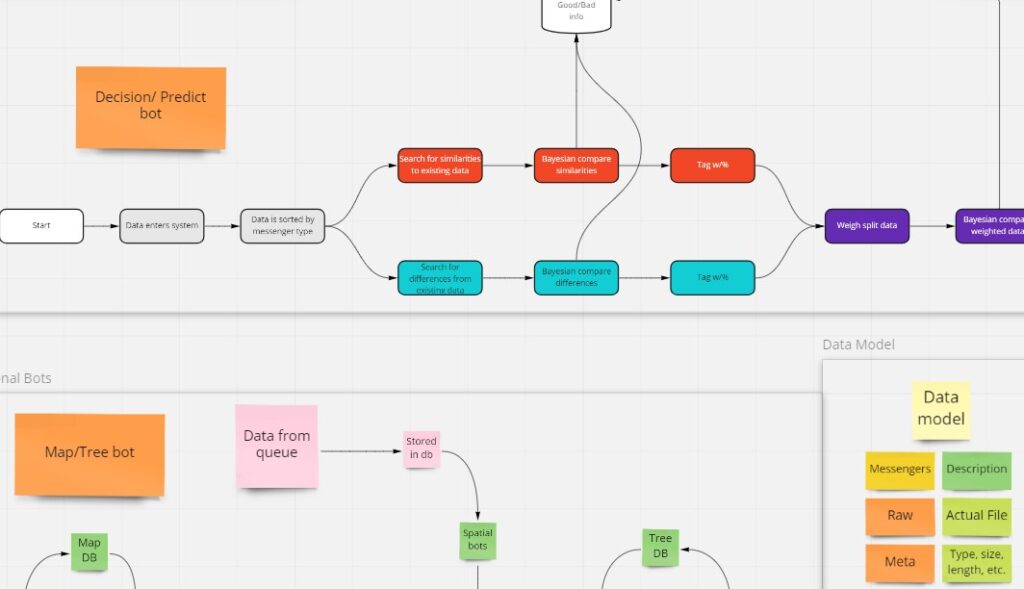

Window works by applying multiple models to each image that is shown on the screen. We use things like object and face detectors, info about the size, shape, color distribution, and entropy of the file, and a number of other models. Each model tags the file with the information it produces, and a confidence value (if appropriate). The cognitive model makes a prediction about what we think the user will do with that image and tags the file with that prediction. We then track the image as it moves through the screen queue. Each image is added at the bottom-right of the screen, so the image that survives the longest eventually makes it to the top-left. We also time it to see how long the image survives. We use this, along with the action the user takes (delete, rescue, zoom), to validate any predictions we make about that image. If an action is taken, we dump all of that info into a database to use later.
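The tagging and validation flow described above can be sketched roughly as follows. This is a minimal illustration, not Window's actual code: the names `TaggedImage`, `tag_image`, `record_outcome`, and the two toy taggers are all hypothetical, and the real system uses far richer models (object and face detectors, for example).

```python
import math
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple

# Each tagger returns (value, confidence); confidence is None when it does not apply.
TagResult = Tuple[object, Optional[float]]
Tagger = Callable[[bytes], TagResult]


def size_tagger(data: bytes) -> TagResult:
    """File size in bytes; an exact measurement, so no confidence value."""
    return len(data), None


def entropy_tagger(data: bytes) -> TagResult:
    """Shannon entropy of the byte distribution, in bits per byte."""
    if not data:
        return 0.0, None
    total = len(data)
    entropy = -sum((n / total) * math.log2(n / total) for n in Counter(data).values())
    return entropy, None


# Hypothetical registry of member models, keyed by tag name.
TAGGERS: Dict[str, Tagger] = {"size_bytes": size_tagger, "byte_entropy": entropy_tagger}


@dataclass
class TaggedImage:
    url: str
    tags: Dict[str, TagResult] = field(default_factory=dict)
    predicted_action: Optional[str] = None  # "delete", "rescue", or "zoom"


def tag_image(url: str, data: bytes, taggers: Dict[str, Tagger]) -> TaggedImage:
    """Run every member model over the image and attach its output as a tag."""
    image = TaggedImage(url=url)
    for name, tagger in taggers.items():
        image.tags[name] = tagger(data)
    return image


def record_outcome(image: TaggedImage, action: str, seconds_on_screen: float) -> dict:
    """Build the row that would be stored when the user acts on an image."""
    return {
        "url": image.url,
        "tags": image.tags,
        "predicted": image.predicted_action,
        "action": action,
        "seconds_on_screen": seconds_on_screen,
        "prediction_correct": image.predicted_action == action,
    }
```

Keeping every model behind the same small function signature is what lets the record carry an arbitrary set of tags without the rest of the pipeline knowing which models produced them.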

Alan is responsible for Window’s architecture, letting all the individual bots and models talk to each other, while I created the algorithms that make the system work so that the data that is gathered can be turned into a coherent model about the user’s inner state. The goal is to create a model that can get resolution down to the user’s unconscious desires. We want to make predictions based on decisions the user doesn’t know that they are making. Our model should be good enough that by the time the user realizes that Window is making decisions for them, we are already making decisions they want us to make.

Emergent Intelligence is something that Alan and I have thought about for quite a while. Window is an extension of the work we did together in $O$, but applied to a purer artificial intelligence problem. We call this “Emergent Intelligence” because none of the individual bots are that smart. Sure, each one is good enough to do its own little piece, but none of them can predict anything about the user on its own. Only when our architecture lets them talk to each other, and the algorithm uses their combined data, does the intelligence necessary to make predictions emerge.

While the current implementation of Window is little more than a toy and proof of concept, the theory behind the model has several major differences from many current AI implementations.

One is that it is data agnostic. Window doesn’t care what kind of data gets fed into it. As long as we can analyze that data in sufficient detail, we can make predictions about it. We are using image data because it is free and readily available, but it could just as easily be text, audio, or anything else. The model doesn’t care, as long as it can analyze it. The second is that we can start working with very little data. Our model is designed to start making predictions immediately. It doesn’t matter how much or how little data it has about the user. Because of the algorithms it uses, we have no minimum data requirements. Our early predictions may be bad, but we can make them right away.
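One way to see how a model can predict with no minimum data requirement is a simple Bayesian estimate with a prior. The sketch below is an assumption made for illustration, not Window's actual algorithm: the class `TagPreference`, the uniform Beta(1, 1) prior, and the reduction to just two actions (rescue versus delete) are all hypothetical simplifications.

```python
from collections import defaultdict


class TagPreference:
    """Per-tag estimate of how likely the user is to rescue an image.

    Uses a Beta prior, so a prediction exists before any data arrives.
    """

    def __init__(self, prior_rescue: float = 1.0, prior_delete: float = 1.0):
        self.prior = (prior_rescue, prior_delete)
        # tag -> [rescue count, delete count]
        self.counts = defaultdict(lambda: [0, 0])

    def update(self, tag: str, rescued: bool) -> None:
        """Record one observed user action for an image carrying this tag."""
        self.counts[tag][0 if rescued else 1] += 1

    def p_rescue(self, tag: str) -> float:
        """Posterior mean probability of a rescue for this tag."""
        rescues, deletes = self.counts.get(tag, (0, 0))
        a, b = self.prior
        return (rescues + a) / (rescues + deletes + a + b)
```

With no observations, `p_rescue` returns 0.5 for any tag: a poor prediction, but an immediate one. After three rescues of images tagged `"cat"`, the estimate moves to (3 + 1) / (3 + 2) = 0.8.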

The third is that our architecture is easy to maintain, easy to upgrade, and portable. Window is completely modular. We have designed it to work in pieces. Every major part can be replaced on the fly. If we decide that we don’t like one of the models we are using, it can be swapped out. If a better model comes along, we can swap that in. If our database crashes, we can connect to a different one. The whole thing can run in the cloud, or it can run in a box locally, or it can be mixed.
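The "connect to a different database" idea can be illustrated with a small failover wrapper. This is only a sketch under assumed names (`Store`, `MemoryStore`, `BrokenStore`, `FailoverStore`); it does not describe how Window is actually wired together.

```python
from typing import Iterable, List, Protocol


class Store(Protocol):
    """Anything that can persist one record; the only contract the pipeline needs."""

    def save(self, row: dict) -> None: ...


class MemoryStore:
    """Toy backend that keeps rows in a list."""

    def __init__(self) -> None:
        self.rows: List[dict] = []

    def save(self, row: dict) -> None:
        self.rows.append(row)


class BrokenStore:
    """Stand-in for a crashed database."""

    def save(self, row: dict) -> None:
        raise ConnectionError("database unavailable")


class FailoverStore:
    """Tries each backend in order and stops at the first one that accepts the row."""

    def __init__(self, stores: Iterable[Store]) -> None:
        self.stores = list(stores)

    def save(self, row: dict) -> None:
        failures = 0
        for store in self.stores:
            try:
                store.save(row)
                return
            except ConnectionError:
                failures += 1
        raise ConnectionError(f"all {failures} stores failed")
```

Because callers only depend on `save`, a local store, a cloud store, or a mix of both can be swapped in without touching the models that produce the rows.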

In short, Window is not just a new way of thinking about AI, it’s a new way of building and running it too. While we like to joke that Window is “Three Bayesian Algorithms in a Trenchcoat”, the things that it can do are no laughing matter.

$O$

$O$ is a tech-based non-profit that Alan and I co-founded in 2018 to help unhoused individuals find support. I have held several roles there: as well as being co-founder, I am the Head of Research and Development and the current Executive Director.

$O$ is difficult to document because we specifically designed it to be a “stealth project”. Unhoused individuals don’t generally have access to computers or the internet, so it has no front end. Most of the work went into minimizing the amount of interaction required. The AI was designed to do as much of the heavy lifting as possible, allowing the staff to focus on empathy and the unhoused individuals to just answer questions.

This meant that most of the work with $O$ focused on the architecture and algorithms that controlled the bots so that the whole process would be as seamless as possible. The goal was that the unhoused individuals wouldn’t realize that Anna-bot wasn’t a real person. We took pains to make sure that the architecture of the entire system was open, transparent, and interruptible. We couldn’t have a black box. The staff had to be able to watch everything that the AI was doing, and be able to step in at any moment.

Again, Alan focused on the architecture and development while I primarily worked on the algorithms and user experience side of things. The architecture that eventually turned into Window was developed here in $O$: multiple “dumb” bots talking to each other to create an effect greater than the sum of their parts. My job was to make sure that the bots asked the right questions, and didn’t act dumb while they did it. To that end, I focused primarily on Anna-bot and Slotbot.

Anna-bot

Anna-bot is a chatbot designed to gather information from unhoused individuals. She consists of several bots working together in a proprietary emergent AI system. Anna-bot is run on Discord using individual bots designed in Botkit.

Anna is designed to mimic human speech patterns as closely as possible. This involved several rounds of testing both in-house and with individuals recruited from Facebook. I designed the survey we used to gather information from the users, recruited the users, analyzed the data, and helped tune Anna through multiple rounds of testing.

Slotbot

Slotbot is one of the sub-bots designed to support Anna-bot. Slotbot’s purpose is to pick the next question that Anna asks users. Slotbot uses a sophisticated algorithm that takes into account the user’s speed of answer, the valence of the questions and answers that the user has given thus far, and the value of the questions that the user has answered thus far. Based on these inputs, the algorithm generates the next question Anna should ask.
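To make the three inputs concrete, here is a toy scoring function. It is emphatically not Slotbot's actual algorithm, which is not described here: the `Question` fields, the valence scale, the `engagement` formula, and the equal weights are all assumptions chosen only to show how answer speed, valence, and question value could combine.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Question:
    text: str
    value: float    # how useful an answer is (higher is better)
    valence: float  # assumed scale: -1.0 (distressing) to +1.0 (pleasant)


def engagement(avg_answer_seconds: float, recent_valence: float) -> float:
    """Rough 0..1 guess at how engaged the user still is (assumed formula)."""
    speed = 1.0 / (1.0 + avg_answer_seconds / 10.0)  # slower answers, lower score
    mood = (recent_valence + 1.0) / 2.0              # map -1..1 onto 0..1
    return 0.5 * speed + 0.5 * mood


def next_question(
    remaining: Sequence[Question],
    avg_answer_seconds: float,
    recent_valence: float,
) -> Question:
    """Pick the highest-value question, discounting distressing ones when engagement is low."""
    level = engagement(avg_answer_seconds, recent_valence)

    def score(question: Question) -> float:
        penalty = (1.0 - level) * max(0.0, -question.valence)
        return question.value * (1.0 - penalty)

    return max(remaining, key=score)
```

Under these assumptions, an engaged user is asked the most valuable question even if it is a hard one, while a user who is slowing down gets a gentler question first.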

Slotbot was tuned through multiple rounds of surveys, which I also designed, recruited users for, and analyzed.

Unhoused Support Survey

The purpose of Anna-bot and Slotbot was to ask questions from the Unhoused Support Survey, a survey developed to find support for unhoused individuals. $O$ gathered the questions needed to qualify for aid from as many agencies supporting unhoused individuals as we could find.

Next, we collated the questions. Each question was then given a value based on how many times it showed up in the paperwork. “What is your name” had very high value because it showed up everywhere. “Were you injured in the Vietnam War” was a low-value question because only a few forms asked it.
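The value assignment can be sketched as a simple frequency count. The function name `question_values` and the sample forms in the usage note are hypothetical, and counting each question at most once per form is an assumption about what "showed up in the paperwork" means.

```python
from collections import Counter
from typing import Dict, Iterable


def question_values(forms: Dict[str, Iterable[str]]) -> Counter:
    """Map each question to the number of forms that ask it."""
    values: Counter = Counter()
    for questions in forms.values():
        values.update(set(questions))  # a form counts once per question
    return values
```

For example, given three made-up forms where all three ask "What is your name?" and only one asks "Were you injured in the Vietnam War?", the first question gets a value of 3 and the second a value of 1.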

Those questions are then fed into Slotbot and Anna-bot. Anna-bot interfaces with end-users through text messages and asks the questions. Based on the data received, Slotbot feeds Anna as many high-value questions as possible (based on its algorithm) before the user disengages from the process. We then give the user the names of the agencies where they might qualify for aid, along with links to paperwork that we have already filled out as far as we could.

The “secret sauce” is in the algorithms that determine how Anna speaks to the users and what questions Slotbot feeds her. Both of these things are designed (through thorough and still-ongoing research) to be as human-like (in Anna’s case) and efficient at getting high-value questions answered (in Slotbot’s case) as possible.

The Unhoused Data Project

Getting accurate data about unhoused individuals is a hard problem by its very nature. Aside from providing aid to these individuals, the other reason $O$ exists is to get accurate information about unhoused individuals.

$O$ runs two ongoing longitudinal studies designed to gather as much data as possible about unhoused individuals. Part of my job is creating a survey (which is constantly being tuned) to gather that data efficiently. We want to know the stories of the unhoused individuals we help. One study is purely quantitative: How many people are out there? Where are they? How long have they been unhoused? We want the hard numbers that are so difficult to gather.

The other is qualitative. Every person we help is a case study. We get their stories. We go beyond the numbers. While my job as Head Researcher at $O$ is about making our products better, it’s also about getting to know every person we talk to. My job is to listen. To hear what people say. And I do it every time someone knocks on our door. Sometimes that’s all it takes to change a life.

That’s what I do as a researcher. Whether it’s for product design or charity. I listen and hear and try to understand. Because the next life I change might be my own.

NotecardsAndString.com

Alan and I worked on a website together called NotecardsAndString.com in 2012. We wanted to create something that mimicked the way that Alan, who was an information architect at the time, did his designing. He would literally get notecards and string and pin them up all over the room or conference table or wherever he happened to be.

While productivity apps and websites like this are now common (we both use Miro, which is essentially a professional version of NotecardsAndString), this was a new thing back then. So we took a page from skeuomorphic design. The website is a corkboard with various cards that can be attached with string and moved around. Alan was the developer, with the entire thing done in JavaScript.

We had plans to include containers (for further productivity) and to gamify the site with badges and other interactables (since we discovered that our main demographic at the time was housewives), but due to various job- and life-related things, the project was abandoned. By the time we came back to it, there were other, better productivity tools already out there.

This was one of my first exposures to design thinking and skeuomorphic design. I have always been a fan of the idea that form is function, so a site that literally was just notecards and string, one that people could pick up and use without needing instruction of any kind, was something I was eager to be a part of.

Game-Based Stealth Therapy for Depression

My Master’s thesis was designing a prototype for a game-based therapy for depression. With my background in psychology and specialization in game design, I decided to follow up on a project that I started in a Positive Psychology class during my undergraduate studies. I wanted to create a form of therapy for individuals who were therapy-averse.

A problem faced by sufferers of depression is that they are often reluctant to accept help, or outright hostile to attempts to help them. Often these issues occur at times when they need help the most, such as after a failed suicide attempt. I wanted to create a form of therapy that would be able to reach this audience: the ones that were least likely to get help on their own, yet needed it the most.

To do so, I integrated techniques from positive psychology and operant conditioning with game design to create a form of therapy that looks, acts, and plays like a game. It uses operant conditioning to reinforce eight key behaviors that depressed individuals often avoid, while using the principles of altruism from positive psychology in the plot and theme to help create a positive mood. While working on this, I used a persona to help me target my audience, ran surveys and built storyboards during iterative prototyping, and conducted user testing as I neared the end of the process.

In the end, I was only able to create an interactive story using Twine to demonstrate the principles of my theory. I am not a programmer and the technical challenges of creating something more were too great to overcome. I was also unable to get approval to do testing with an at-risk audience due to the ethics and risks involved, but I did write up several preliminary experimental designs to test the therapy’s effectiveness in the paper that accompanied the prototype.